Benchmark ConvGru - 2020 April 14

[1]:

import datetime
import numpy as np
from matplotlib import pyplot as plt
import torch
import torch.nn as nn
import torch.optim as optim
from torch.utils.data import DataLoader, random_split
from tqdm import tqdm
import seaborn as sns

from tst.loss import OZELoss
from src.benchmark import BiGRU, ConvGru
from src.dataset import OzeDataset
from src.utils import compute_loss
from src.visualization import map_plot_function, plot_values_distribution, plot_error_distribution, plot_errors_threshold, plot_visual_sample

[2]:

# Training parameters
DATASET_PATH = 'datasets/dataset_CAPT_v7.npz'
BATCH_SIZE = 8
NUM_WORKERS = 4
LR = 1e-4
EPOCHS = 30

# Model parameters
d_model = 48  # Latent dim
N = 2  # Number of layers
dropout = 0.2  # Dropout rate
d_input = 38  # From dataset
d_output = 8  # From dataset

# Config
sns.set()
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
print(f"Using device {device}")

Using device cuda:0

Training

Load dataset

[3]:

ozeDataset = OzeDataset(DATASET_PATH)
dataset_train, dataset_val, dataset_test = random_split(ozeDataset, (38000, 1000, 1000))
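`random_split` requires the lengths to add up to `len(ozeDataset)`, so this split implies the dataset holds 40,000 samples. No generator is seeded, so the partition is re-drawn on every run. The NumPy sketch below illustrates the same idea with a seeded permutation; `split_indices` is a hypothetical helper, not part of `src` or PyTorch.

```python
import numpy as np

def split_indices(n_samples, lengths, seed=0):
    """Hypothetical helper: randomly partition range(n_samples) into disjoint
    index subsets of the given lengths (similar in spirit to random_split)."""
    if sum(lengths) != n_samples:
        raise ValueError("lengths must sum to the number of samples")
    # A seeded generator makes the split reproducible across runs
    permutation = np.random.default_rng(seed).permutation(n_samples)
    # Consecutive slices of the shuffled indices form the subsets
    boundaries = np.cumsum(lengths)[:-1]
    return np.split(permutation, boundaries)

train_idx, val_idx, test_idx = split_indices(40000, (38000, 1000, 1000))
print(len(train_idx), len(val_idx), len(test_idx))  # 38000 1000 1000
```

In recent PyTorch versions, `random_split` also accepts a `generator` argument (for example `torch.Generator().manual_seed(0)`) to make the split reproducible.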

[4]:

dataloader_train = DataLoader(dataset_train,
                              batch_size=BATCH_SIZE,
                              shuffle=True,
                              num_workers=NUM_WORKERS,
                              pin_memory=False
                              )

dataloader_val = DataLoader(dataset_val,
                            batch_size=BATCH_SIZE,
                            shuffle=True,
                            num_workers=NUM_WORKERS
                            )

dataloader_test = DataLoader(dataset_test,
                             batch_size=BATCH_SIZE,
                             shuffle=False,
                             num_workers=NUM_WORKERS
                             )
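With `BATCH_SIZE = 8`, each loader yields ceil(n / 8) batches, because `DataLoader` keeps a final incomplete batch unless `drop_last=True`. The plain-Python arithmetic below gives 4,750 training batches per epoch and 125 batches each for validation and test, which matches the `125/125` shown by the test-set progress bar further down. `num_batches` is a hypothetical helper.

```python
import math

def num_batches(n_samples, batch_size, drop_last=False):
    """Hypothetical helper: number of batches a DataLoader-style iterator yields."""
    if drop_last:
        # The incomplete final batch is discarded
        return n_samples // batch_size
    # The incomplete final batch is kept
    return math.ceil(n_samples / batch_size)

BATCH_SIZE = 8
for name, n in [("train", 38000), ("val", 1000), ("test", 1000)]:
    print(name, num_batches(n, BATCH_SIZE))
# train 4750
# val 125
# test 125
```

Every split size here is a multiple of 8, so no loader produces a smaller final batch.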

Load network

[5]:

# Load the ConvGru benchmark with an Adam optimizer and the custom OZELoss loss function
net = ConvGru(d_input, d_model, d_output, N, dropout=dropout, bidirectional=True).to(device)
optimizer = optim.Adam(net.parameters(), lr=LR)
loss_function = OZELoss(alpha=0.3)

Train

[6]:

model_save_path = f'models/model_LSTM_{datetime.datetime.now().strftime("%Y_%m_%d__%H%M%S")}.pth'

val_loss_best = np.inf

# Prepare loss history

hist_loss = np.zeros(EPOCHS)

hist_loss_val = np.zeros(EPOCHS)

for idx_epoch in range(EPOCHS):

running_loss = 0

with tqdm(total=len(dataloader_train.dataset), desc=f"[Epoch {idx_epoch+1:3d}/{EPOCHS}]") as pbar:

for idx_batch, (x, y) in enumerate(dataloader_train):

optimizer.zero_grad()

# Propagate input

netout = net(x.to(device))

# Comupte loss

loss = loss_function(y.to(device), netout)

# Backpropage loss

loss.backward()

# Update weights

optimizer.step()

running_loss += loss.item()

pbar.set_postfix({'loss': running_loss/(idx_batch+1)})

pbar.update(x.shape[0])

train_loss = running_loss/len(dataloader_train)

val_loss = compute_loss(net, dataloader_val, loss_function, device).item()

pbar.set_postfix({'loss': train_loss, 'val_loss': val_loss})

hist_loss[idx_epoch] = train_loss

hist_loss_val[idx_epoch] = val_loss

if val_loss < val_loss_best:

val_loss_best = val_loss

torch.save(net.state_dict(), model_save_path)

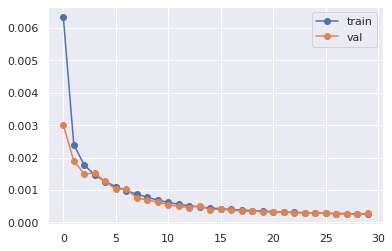

plt.plot(hist_loss, 'o-', label='train')

plt.plot(hist_loss_val, 'o-', label='val')

plt.legend()

print(f"model exported to {model_save_path} with loss {val_loss_best:5f}")

[Epoch 1/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.53it/s, loss=0.00635, val_loss=0.00301]

[Epoch 2/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.56it/s, loss=0.00241, val_loss=0.0019]

[Epoch 3/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.54it/s, loss=0.00177, val_loss=0.0015]

[Epoch 4/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.51it/s, loss=0.00147, val_loss=0.00152]

[Epoch 5/30]: 100%|██████████| 38000/38000 [07:39<00:00, 82.63it/s, loss=0.00126, val_loss=0.00126]

[Epoch 6/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.56it/s, loss=0.00111, val_loss=0.00103]

[Epoch 7/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.53it/s, loss=0.000981, val_loss=0.00103]

[Epoch 8/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.57it/s, loss=0.000876, val_loss=0.000755]

[Epoch 9/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.49it/s, loss=0.000778, val_loss=0.000698]

[Epoch 10/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.58it/s, loss=0.000688, val_loss=0.000631]

[Epoch 11/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.55it/s, loss=0.00062, val_loss=0.000549]

[Epoch 12/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.43it/s, loss=0.000561, val_loss=0.000497]

[Epoch 13/30]: 100%|██████████| 38000/38000 [07:41<00:00, 82.34it/s, loss=0.000514, val_loss=0.000461]

[Epoch 14/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.50it/s, loss=0.000478, val_loss=0.000513]

[Epoch 15/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.49it/s, loss=0.000447, val_loss=0.000399]

[Epoch 16/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.48it/s, loss=0.000424, val_loss=0.000407]

[Epoch 17/30]: 100%|██████████| 38000/38000 [07:41<00:00, 82.30it/s, loss=0.000401, val_loss=0.000382]

[Epoch 18/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.53it/s, loss=0.000381, val_loss=0.000346]

[Epoch 19/30]: 100%|██████████| 38000/38000 [07:41<00:00, 82.38it/s, loss=0.000365, val_loss=0.00035]

[Epoch 20/30]: 100%|██████████| 38000/38000 [07:40<00:00, 82.47it/s, loss=0.000351, val_loss=0.000329]

[Epoch 21/30]: 100%|██████████| 38000/38000 [06:04<00:00, 104.30it/s, loss=0.000335, val_loss=0.000313]

[Epoch 22/30]: 100%|██████████| 38000/38000 [03:08<00:00, 201.75it/s, loss=0.000323, val_loss=0.000329]

[Epoch 23/30]: 100%|██████████| 38000/38000 [03:07<00:00, 202.14it/s, loss=0.000313, val_loss=0.000291]

[Epoch 24/30]: 100%|██████████| 38000/38000 [03:07<00:00, 202.21it/s, loss=0.0003, val_loss=0.000302]

[Epoch 25/30]: 100%|██████████| 38000/38000 [03:07<00:00, 202.71it/s, loss=0.000294, val_loss=0.000298]

[Epoch 26/30]: 100%|██████████| 38000/38000 [03:07<00:00, 202.67it/s, loss=0.000284, val_loss=0.000279]

[Epoch 27/30]: 100%|██████████| 38000/38000 [03:07<00:00, 202.40it/s, loss=0.000276, val_loss=0.000265]

[Epoch 28/30]: 100%|██████████| 38000/38000 [03:07<00:00, 202.67it/s, loss=0.000272, val_loss=0.000265]

[Epoch 29/30]: 100%|██████████| 38000/38000 [03:07<00:00, 203.04it/s, loss=0.000265, val_loss=0.000248]

[Epoch 30/30]: 100%|██████████| 38000/38000 [03:07<00:00, 202.93it/s, loss=0.000258, val_loss=0.000281]

model exported to models/model_LSTM_2020_04_14__101819.pth with loss 0.000248
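The training cell saves the weights only when `val_loss` is strictly lower than the best value seen so far. Replaying that rule on the validation losses printed in the epoch log above (as rounded in the progress-bar display) selects epoch 29, consistent with the exported loss of 0.000248. `best_checkpoint` is a hypothetical helper that reproduces the selection logic without PyTorch.

```python
import math

# val_loss values copied from the epoch log above (rounded by the progress bar)
val_losses = [
    0.00301, 0.0019, 0.0015, 0.00152, 0.00126, 0.00103, 0.00103, 0.000755,
    0.000698, 0.000631, 0.000549, 0.000497, 0.000461, 0.000513, 0.000399,
    0.000407, 0.000382, 0.000346, 0.00035, 0.000329, 0.000313, 0.000329,
    0.000291, 0.000302, 0.000298, 0.000279, 0.000265, 0.000265, 0.000248,
    0.000281,
]

def best_checkpoint(losses):
    """Return (epoch, loss) of the last save under the 'save if strictly better' rule."""
    best_loss, best_epoch = math.inf, None
    for epoch, loss in enumerate(losses, start=1):
        if loss < best_loss:  # same strict comparison as the training loop
            best_loss, best_epoch = loss, epoch
    return best_epoch, best_loss

print(best_checkpoint(val_losses))  # (29, 0.000248)
```

Because the comparison is strict, a tie (such as epochs 27 and 28 in the rounded log) keeps the earlier checkpoint.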

Validation

[7]:

_ = net.eval()
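Switching to evaluation mode matters because the model was built with `dropout=0.2`. Assuming that dropout is applied through standard PyTorch layers, `nn.Dropout` zeroes each activation with probability p and rescales the survivors by 1/(1 - p) in training mode, and acts as the identity in evaluation mode. The NumPy sketch below illustrates that behaviour; `dropout` here is a stand-in function, not the layer used inside `ConvGru`.

```python
import numpy as np

def dropout(x, p, training, rng):
    """Inverted dropout: illustrative re-implementation of nn.Dropout's behaviour."""
    if not training or p == 0:
        # Evaluation mode: inputs pass through unchanged
        return x
    # Keep each element with probability 1 - p
    keep = rng.random(x.shape) >= p
    # Rescale survivors so the expected value matches the input
    return np.where(keep, x / (1.0 - p), 0.0)

rng = np.random.default_rng(0)
x = np.ones((1000, 48))  # e.g. activations with d_model = 48 features
train_out = dropout(x, 0.2, training=True, rng=rng)
eval_out = dropout(x, 0.2, training=False, rng=rng)
print(np.array_equal(eval_out, x))  # True
print(float(train_out.mean()))      # close to 1.0: the expectation is preserved
```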

Evaluate on the test dataset

[8]:

# One row per test sample: (samples, 168 time steps, output variables)
predictions = np.empty(shape=(len(dataloader_test.dataset), 168, d_output))
idx_prediction = 0

with torch.no_grad():
    for x, y in tqdm(dataloader_test, total=len(dataloader_test)):
        netout = net(x.to(device)).cpu().numpy()
        # The test loader is not shuffled, so rows stay in dataset order
        predictions[idx_prediction:idx_prediction+x.shape[0]] = netout
        idx_prediction += x.shape[0]

100%|██████████| 125/125 [00:01<00:00, 82.73it/s]

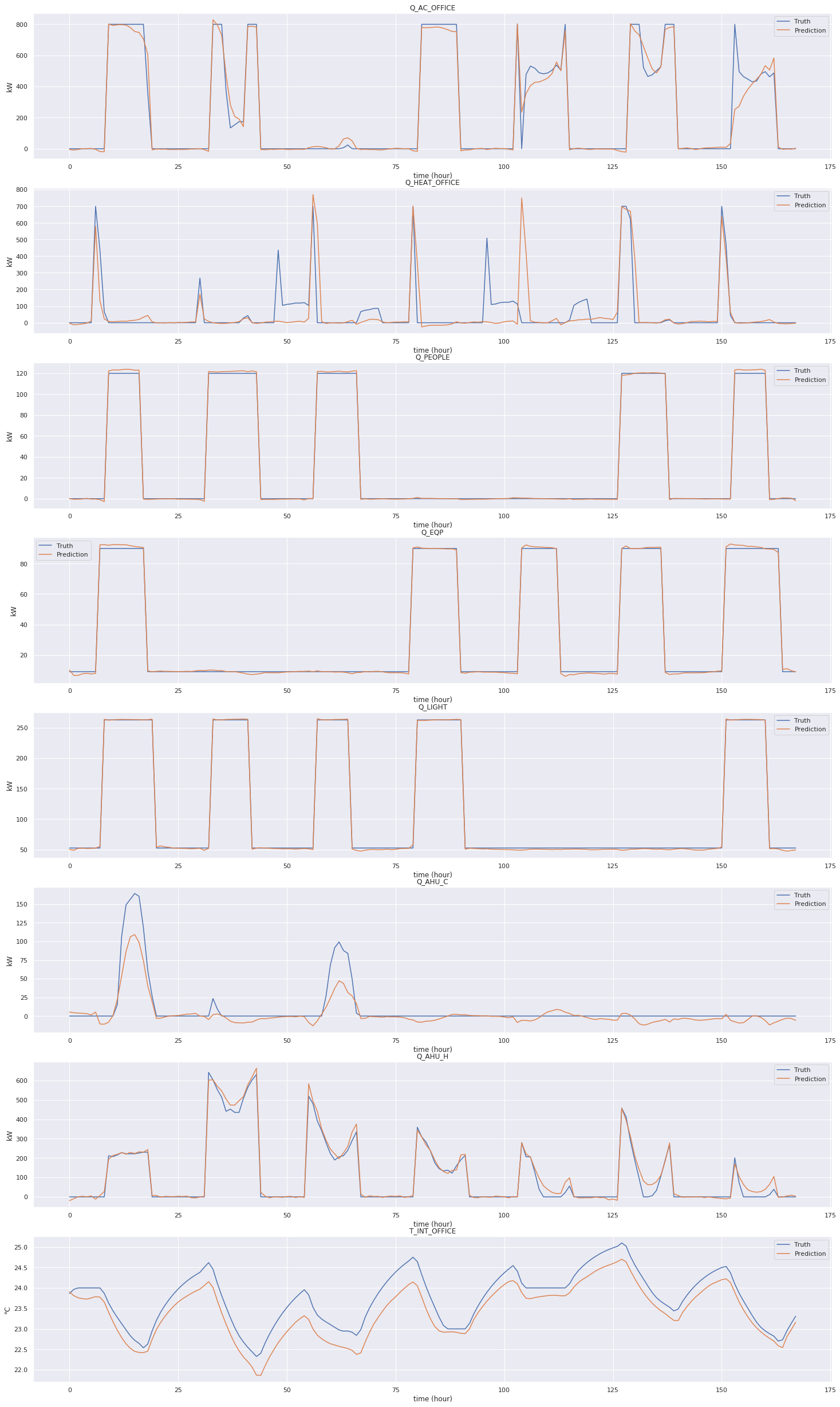
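The evaluation loop fills a preallocated array batch by batch, advancing `idx_prediction` by the actual batch size so that a smaller final batch would also be handled. Because `dataloader_test` uses `shuffle=False`, row i of `predictions` corresponds to sample `dataloader_test.dataset.indices[i]` of `ozeDataset`, which is why the plotting calls pass `dataset_indices`. The sketch reproduces the filling pattern with NumPy; `fill_predictions`, `fake_batches` and the toy sizes are assumptions standing in for the real network outputs.

```python
import numpy as np

def fill_predictions(batches, n_samples, seq_len, n_outputs):
    """Copy per-batch outputs into one preallocated (n_samples, seq_len, n_outputs) array."""
    predictions = np.empty((n_samples, seq_len, n_outputs))
    idx = 0
    for batch in batches:
        batch_size = batch.shape[0]
        predictions[idx:idx + batch_size] = batch
        idx += batch_size
    # Every row must have been written exactly once
    if idx != n_samples:
        raise ValueError("batches do not cover the whole buffer")
    return predictions

# Toy stand-in for network outputs: 10 samples split into batches of 4, 4 and 2
rng = np.random.default_rng(0)
outputs = rng.normal(size=(10, 168, 8))
fake_batches = [outputs[0:4], outputs[4:8], outputs[8:10]]
predictions = fill_predictions(fake_batches, 10, 168, 8)
print(np.array_equal(predictions, outputs))  # True
```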

Plot results on a sample

[9]:

map_plot_function(ozeDataset, predictions, plot_visual_sample, dataset_indices=dataloader_test.dataset.indices)

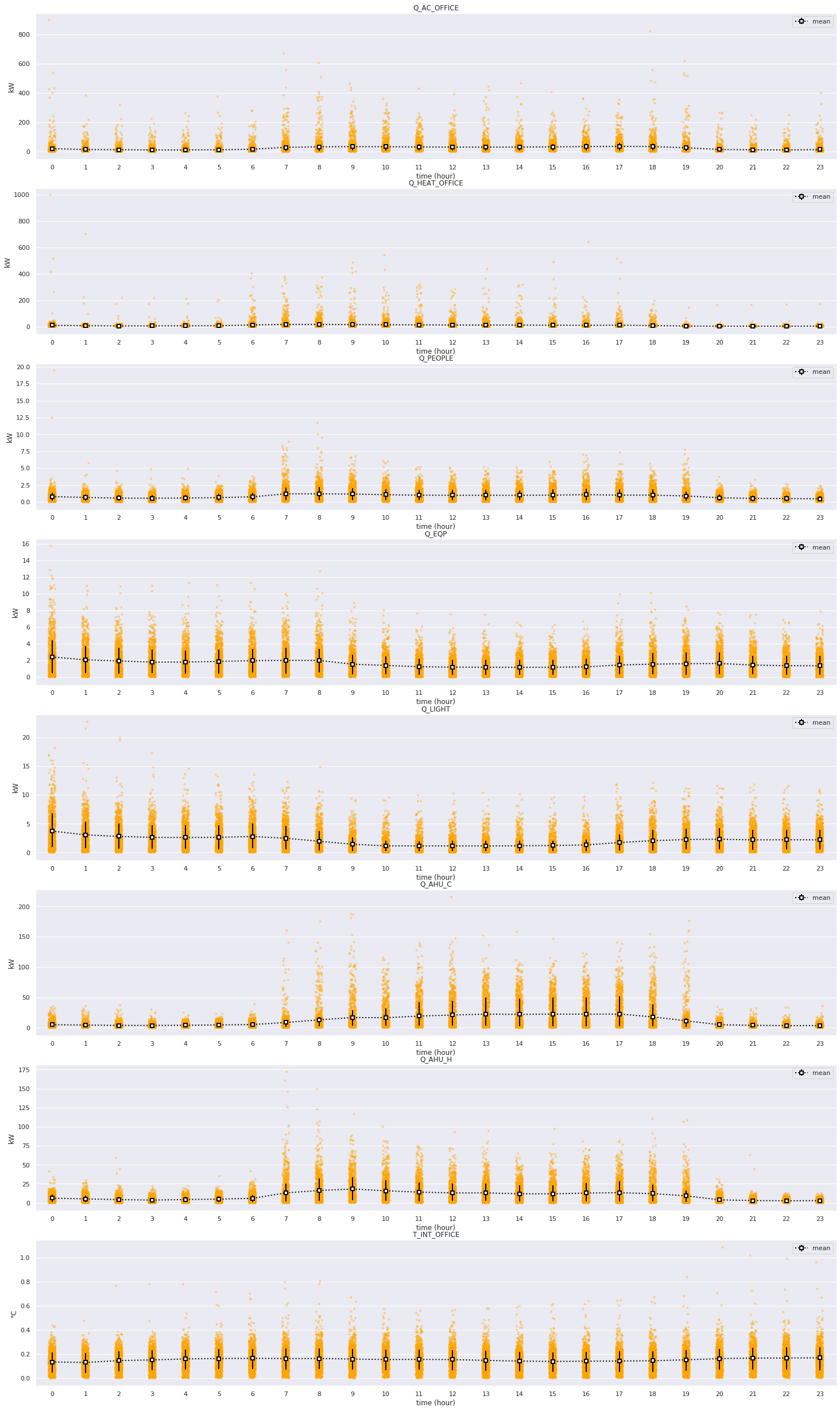

Plot error distributions

[10]:

map_plot_function(ozeDataset, predictions, plot_error_distribution, dataset_indices=dataloader_test.dataset.indices, time_limit=24)
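`plot_error_distribution` belongs to the project's `src.visualization` module, and its internals are not shown here. As an illustration only, assuming `time_limit=24` restricts the analysis to the first 24 time steps of each sample, per-variable absolute errors could be gathered as below; `error_distribution` is a hypothetical helper and the data are synthetic.

```python
import numpy as np

def error_distribution(y_true, y_pred, time_limit=None):
    """Hypothetical helper: absolute errors per output variable, flattened over samples and time.

    y_true, y_pred: arrays of shape (n_samples, seq_len, n_outputs).
    time_limit: if given, keep only the first `time_limit` time steps (an assumption).
    """
    errors = np.abs(y_pred - y_true)
    if time_limit is not None:
        errors = errors[:, :time_limit]
    # One column of errors per output variable
    return errors.reshape(-1, errors.shape[-1])

rng = np.random.default_rng(0)
y_true = rng.normal(size=(5, 168, 8))
y_pred = y_true + 0.1  # constant offset so the errors are known in advance
errs = error_distribution(y_true, y_pred, time_limit=24)
print(errs.shape)  # (120, 8)
```

Each column of `errs` could then be passed to a histogram or density plot, one per output variable.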

Plot misprediction thresholds

[ ]:

map_plot_function(ozeDataset, predictions, plot_errors_threshold, plot_kwargs={'error_band': 0.1}, dataset_indices=dataloader_test.dataset.indices)
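Likewise, `plot_errors_threshold` is project code whose exact definition of `error_band` is not given here. Assuming `error_band` is an absolute tolerance on the outputs, the share of mispredicted points per output variable could be computed as below; `misprediction_rate` is a hypothetical helper and the arrays are toy data.

```python
import numpy as np

def misprediction_rate(y_true, y_pred, error_band=0.1):
    """Hypothetical helper: fraction of points whose absolute error exceeds error_band,
    computed separately for each output variable (last axis)."""
    outside = np.abs(y_pred - y_true) > error_band
    # Average over every axis except the output-variable axis
    return outside.reshape(-1, outside.shape[-1]).mean(axis=0)

y_true = np.zeros((2, 4, 2))
y_pred = np.zeros((2, 4, 2))
y_pred[..., 0] = 0.05   # variable 0: always inside the band
y_pred[0, :, 1] = 0.5   # variable 1: outside the band for one sample out of two
print(misprediction_rate(y_true, y_pred))  # [0.  0.5]
```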